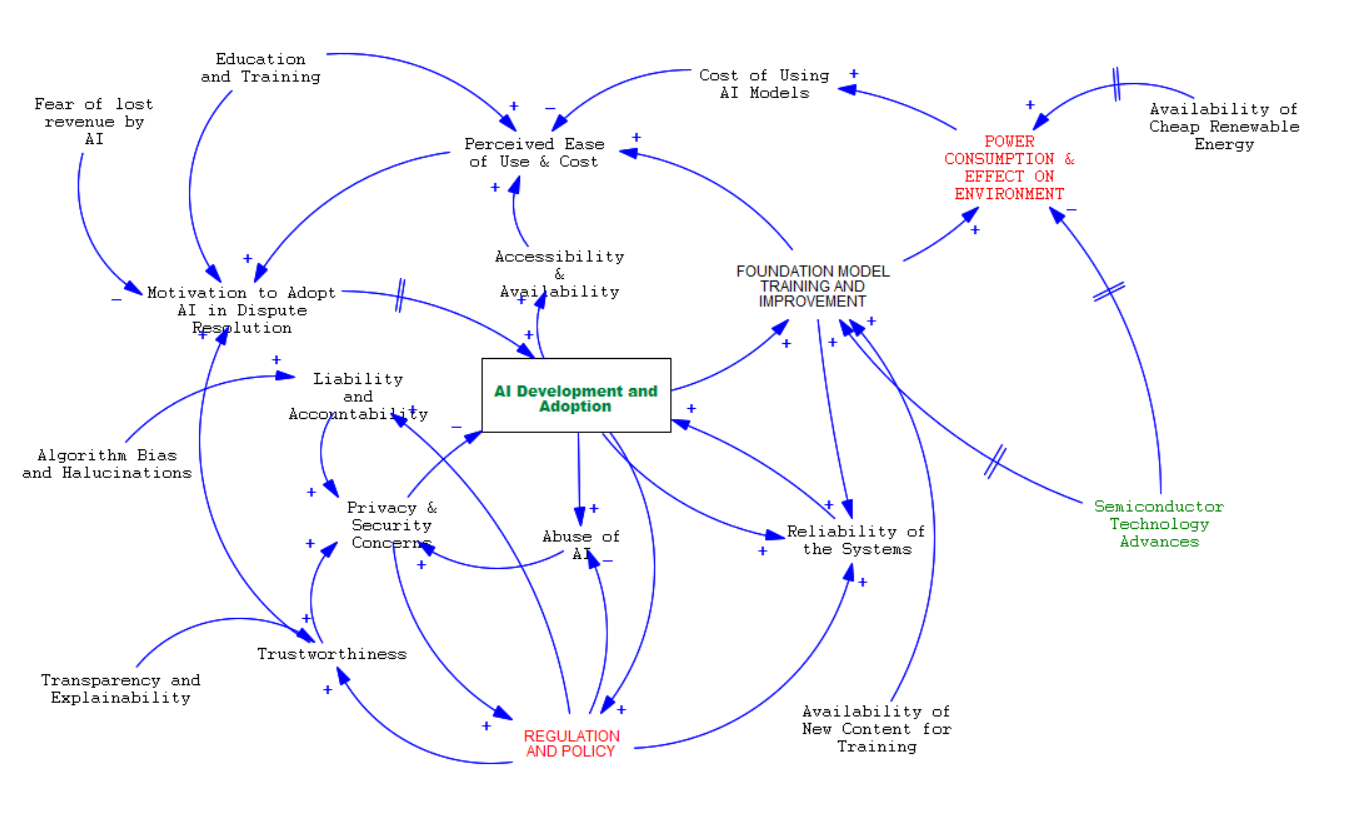

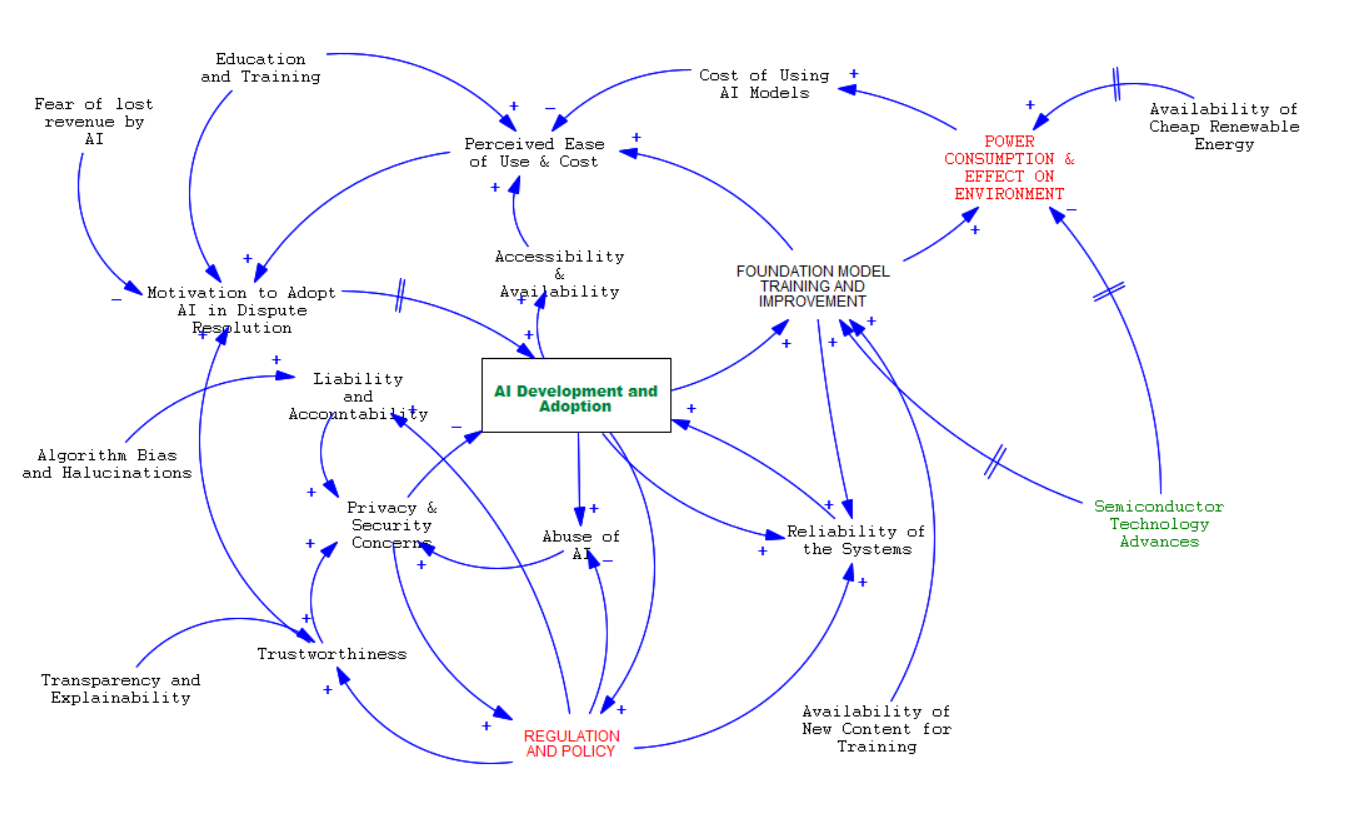

20 variables, 36 causal links, four feedback loops. Double-bars (‖) on an arrow indicate a delay.

Original Stella® model — Bob Bergman, AZ Decision Science.

This system-dynamics view of AI adoption takes seriously what most adoption models leave out: the power consumption and environmental footprint of foundation-model training, the regulatory backpressure that follows from misuse, and the trust dynamics that quietly govern uptake. The diagram traces 20 variables through four interacting feedback loops.

Each arrow shows the direction of causal influence. A + means the variables move in the same direction; a − means they move in opposite directions. Loops are either reinforcing (amplify behavior) or balancing (push toward equilibrium). Double-bars (‖) on an arrow indicate a delay — useful for arrows where the cause and effect take real-world time to play out (semiconductor advances, energy infrastructure, environmental impact).
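The reinforcing-versus-balancing rule above follows from the link signs: a loop with an even number of − links is reinforcing, and one with an odd number is balancing. A minimal Python sketch of that check is below. The function name `loop_polarity` and the two example loops are illustrative assumptions, not taken from the Stella® model.

```python
from math import prod


def loop_polarity(link_signs):
    """Classify a feedback loop from the signs (+1 or -1) of its links.

    The product of the signs is positive when the number of negative
    links is even (reinforcing) and negative when it is odd (balancing).
    """
    if any(sign not in (1, -1) for sign in link_signs):
        raise ValueError("each link sign must be +1 or -1")
    return "reinforcing" if prod(link_signs) > 0 else "balancing"


# Hypothetical loops for illustration only; the actual links and
# variable names in the diagram may differ.
demand_loop = [+1, +1, +1]      # every link moves in the same direction
regulation_loop = [+1, +1, -1]  # one link pushes back

print(loop_polarity(demand_loop))
print(loop_polarity(regulation_loop))
```

Delays (‖) do not change a loop's polarity; they change how long the loop takes to act.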

"Adoption" debates often reduce to demand-side optimism (the R loop) versus regulation-side caution (the B1 loop). The B2 cost / power loop is what most stakeholders miss — and it's the one that quietly sets the ceiling on how fast adoption can scale. When you can see the structure, you can debate the policy lever, not just the headline.
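To make the "ceiling" idea concrete, here is a toy simulation, not the original model. It assumes a single adoption stock driven by one reinforcing loop, with an optional balancing term that weakens growth as adoption approaches a capacity limit (a stand-in for the cost / power constraint). The names `simulate`, `growth`, and `capacity` and all numeric values are hypothetical.

```python
def simulate(steps=200, dt=0.1, growth=0.8, capacity=None):
    """Euler-integrate a toy adoption stock.

    capacity=None -> reinforcing loop only (unchecked growth).
    capacity=x    -> adds a balancing loop that slows growth as
                     adoption approaches x (logistic-style limit).
    """
    adoption = 0.01  # assumed starting level, arbitrary units
    history = [adoption]
    for _ in range(steps):
        if capacity is None:
            headroom = 1.0
        else:
            # Fraction of capacity still available; never negative.
            headroom = max(0.0, 1.0 - adoption / capacity)
        adoption += dt * growth * adoption * headroom
        history.append(adoption)
    return history


uncapped = simulate()
capped = simulate(capacity=1.0)

print(f"final adoption without capacity loop: {uncapped[-1]:.3g}")
print(f"final adoption with capacity loop:    {capped[-1]:.3g}")
```

In this sketch the reinforcing loop sets how fast adoption climbs, while the balancing loop alone determines where it levels off, which is the sense in which a capacity loop sets the ceiling.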

Built during a system-dynamics modeling engagement focused on AI policy in dispute resolution. The same approach applies to any adoption-vs-regulation system — autonomous vehicles, healthcare AI, financial automation.

Adoption dynamics, policy resistance, capacity loops, growth-vs-regulation tradeoffs — if it has feedback, we can model it.